Designing a web scraping proxy network platform for high availability requires a careful balance between scalability, resilience, performance, and anonymity. As organizations increasingly rely on real-time data extraction for competitive intelligence, pricing analytics, SEO tracking, and market research, downtime or detection can translate into lost revenue and missed opportunities. A robust proxy network infrastructure keeps scraping operations uninterrupted and adaptive, and makes them harder to detect, even under heavy loads or in hostile anti-bot environments.

TL;DR: Building a highly available web scraping proxy network requires distributed infrastructure, intelligent IP rotation, load balancing, health monitoring, and automated failover systems. Redundancy across geographies and providers reduces downtime and detection risks. Smart request routing and real-time analytics improve efficiency and reliability. Security, scalability, and automation are the cornerstones of long-term success.

A modern proxy network platform is not just a list of IP addresses. It is a dynamic ecosystem combining infrastructure management, intelligent routing, monitoring systems, and automated response mechanisms. The goal is to keep data collection operations running smoothly even if individual nodes or entire regions fail.

Core Architecture of a High-Availability Proxy Network

High availability begins with architectural design. A proxy platform should be distributed across multiple layers to avoid single points of failure. The core components typically include:

- Proxy Node Pool – A diversified set of residential, datacenter, and mobile IPs.

- Load Balancers – Systems that distribute traffic across healthy proxy nodes.

- Health Monitoring Services – Continuous tracking of IP responsiveness and block rates.

- Control Plane – Centralized configuration management and orchestration.

- Rotation and Session Managers – Tools that determine when and how IPs rotate.

Distributing components across multiple cloud providers or hybrid environments improves resilience. If one region goes down, automated failover can reroute traffic through nodes in the remaining regions.

Redundancy and Geographic Distribution

Redundancy is the backbone of high availability. Designing for failure—rather than assuming uptime—is a best practice. Proxy networks should:

- Deploy IP pools across multiple data centers and geographic regions.

- Use multiple upstream providers to avoid dependency on a single vendor.

- Implement automatic failover mechanisms for routing.

- Maintain reserve IP capacity ready for scaling or replacements.
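The automatic failover item can be illustrated with a minimal Python sketch. It assumes that each node record carries a region label and a health flag, and that the health flag is maintained elsewhere (for example, by health monitoring). The `ProxyNode` class, the `pick_failover_node` function, and the documentation-range IP addresses are hypothetical illustrations, not any specific product's API.

```python
from dataclasses import dataclass

@dataclass
class ProxyNode:
    address: str        # "host:port" of the proxy
    region: str         # e.g. a cloud region or data-center label
    healthy: bool = True

def pick_failover_node(nodes, preferred_regions):
    """Return the first healthy node, trying regions in priority order.

    If every preferred region is down, fall back to any healthy node
    elsewhere so a regional outage degrades service instead of stopping it.
    """
    for region in preferred_regions:
        for node in nodes:
            if node.region == region and node.healthy:
                return node
    for node in nodes:
        if node.healthy:
            return node
    raise RuntimeError("no healthy proxy nodes available")

# Example pool using documentation-only IP ranges.
pool = [
    ProxyNode("192.0.2.10:8080", "us-east"),
    ProxyNode("198.51.100.10:8080", "eu-west"),
    ProxyNode("203.0.113.10:8080", "ap-south"),
]
pool[0].healthy = False                     # simulate a us-east outage
node = pick_failover_node(pool, ["us-east", "eu-west"])
print(node.address)                         # 198.51.100.10:8080
```

A real router would also spread load across several healthy nodes instead of always returning the first match. The sketch only shows the ordering logic of failover.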

Geographic diversity also reduces detection risks. Many websites analyze traffic patterns by region. A limited regional footprint may trigger suspicion, whereas diversified locations appear more organic.

Load Balancing and Intelligent Traffic Routing

Load balancing ensures traffic is distributed efficiently among available proxy nodes. Without it, certain IPs become overloaded and blocked more quickly.

Modern load balancing strategies include:

- Round Robin – Sequential distribution across nodes.

- Least Connections – Routing toward the least busy proxy.

- Latency-Based Routing – Directing traffic through the fastest node.

- Reputation-Based Routing – Prioritizing IPs with lower block rates.
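The first three strategies in the list take only a few lines each. The following is an illustrative Python sketch. It assumes that connection counts and latency figures are kept up to date by another component, and the names `Node`, `RoundRobin`, `least_connections`, and `lowest_latency` are hypothetical.

```python
import itertools
from dataclasses import dataclass

@dataclass
class Node:
    address: str
    active_connections: int = 0   # open connections currently routed here
    latency_ms: float = 0.0       # recent measured latency

class RoundRobin:
    """Hand out nodes in a fixed, repeating order."""
    def __init__(self, nodes):
        self._cycle = itertools.cycle(nodes)

    def pick(self):
        return next(self._cycle)

def least_connections(nodes):
    """Choose the node currently serving the fewest open connections."""
    return min(nodes, key=lambda n: n.active_connections)

def lowest_latency(nodes):
    """Choose the node with the lowest recently measured latency."""
    return min(nodes, key=lambda n: n.latency_ms)

nodes = [
    Node("192.0.2.1:8080", active_connections=12, latency_ms=80.0),
    Node("192.0.2.2:8080", active_connections=3, latency_ms=140.0),
    Node("192.0.2.3:8080", active_connections=7, latency_ms=45.0),
]
print(least_connections(nodes).address)  # 192.0.2.2:8080
print(lowest_latency(nodes).address)     # 192.0.2.3:8080
```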

Reputation-based routing is particularly important in scraping contexts. Nodes are continuously scored based on response codes, CAPTCHAs, and block frequency. Intelligent systems dynamically adjust routing to maximize success rates.
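One way to turn those signals into a routing decision is to keep an exponentially weighted reputation score per IP and combine it with weighted random selection. The sketch below assumes that each response has already been classified as `ok`, `captcha`, `blocked`, or `error`. The penalty values, the `alpha` smoothing factor, and the `ReputationTracker` name are hypothetical starting points, not recommended settings.

```python
import random

# Hypothetical penalty per response outcome (0.0 = no penalty, 1.0 = worst).
OUTCOME_PENALTY = {"ok": 0.0, "captcha": 0.5, "blocked": 1.0, "error": 0.3}

class ReputationTracker:
    """Keep an exponentially weighted reputation score per proxy.

    A score of 1.0 means recent requests all succeeded. The score drifts
    toward 0.0 as CAPTCHAs, block responses (e.g. HTTP 403/429), and
    errors accumulate.
    """

    def __init__(self, alpha=0.2):
        self.alpha = alpha      # weight given to the newest observation
        self.scores = {}

    def record(self, proxy, outcome):
        sample = 1.0 - OUTCOME_PENALTY[outcome]
        prev = self.scores.get(proxy, 1.0)   # unseen proxies start fully trusted
        self.scores[proxy] = (1 - self.alpha) * prev + self.alpha * sample

    def pick(self, proxies, rng=random):
        # Better-reputed proxies receive proportionally more traffic, while
        # weak ones still get a trickle (floor of 0.01) so they can recover.
        weights = [max(self.scores.get(p, 1.0), 0.01) for p in proxies]
        return rng.choices(proxies, weights=weights, k=1)[0]
```

Weighted random selection, rather than always choosing the top score, avoids concentrating all traffic on a single "best" IP, which would wear it out faster.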

Health Monitoring and Self-Healing Systems

For a proxy network platform to remain reliable, real-time monitoring is mandatory. Each IP should be continuously evaluated for:

- Response time

- HTTP status codes

- CAPTCHA challenges

- Connection failures

- Throughput limits

When thresholds are exceeded, automated systems can quarantine problematic IPs and replace them with fresh ones. This self-healing capability dramatically reduces manual intervention and downtime.

Key monitoring tools often include metrics dashboards, alerting systems, and automated remediation scripts that update routing tables in seconds.
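The following sketch shows threshold-based quarantine for three of the metrics above (response time, connection and server failures, and CAPTCHA challenges), evaluated over a sliding window of recent requests. The thresholds, window size, and class names are assumptions made for illustration. Requesting replacement IPs from an upstream provider is left out because that step depends on the provider's own API.

```python
from collections import deque
from dataclasses import dataclass
from typing import Optional

@dataclass
class HealthThresholds:
    # Hypothetical defaults; real values depend on the targets and proxy type.
    max_latency_ms: float = 3000.0
    max_failure_rate: float = 0.3    # share of connection failures and 5xx
    max_captcha_rate: float = 0.2
    window: int = 50                 # recent requests kept per proxy
    min_samples: int = 10            # don't judge a proxy on too little data

@dataclass
class ProbeResult:
    latency_ms: float
    status: Optional[int]            # None when the connection itself failed
    captcha: bool = False

class HealthMonitor:
    """Quarantine proxies whose recent results cross any threshold."""

    def __init__(self, thresholds: Optional[HealthThresholds] = None):
        self.t = thresholds or HealthThresholds()
        self.history: dict = {}
        self.quarantined: set = set()

    def record(self, proxy: str, result: ProbeResult) -> None:
        window = self.history.setdefault(proxy, deque(maxlen=self.t.window))
        window.append(result)
        if len(window) >= self.t.min_samples and self._unhealthy(window):
            self.quarantined.add(proxy)

    def _unhealthy(self, window) -> bool:
        n = len(window)
        failures = sum(1 for r in window if r.status is None or r.status >= 500)
        captchas = sum(1 for r in window if r.captcha)
        avg_latency = sum(r.latency_ms for r in window) / n
        return (failures / n > self.t.max_failure_rate
                or captchas / n > self.t.max_captcha_rate
                or avg_latency > self.t.max_latency_ms)

    def active(self, proxies):
        """Return the proxies that are still eligible for routing."""
        return [p for p in proxies if p not in self.quarantined]

    def release(self, proxy: str) -> None:
        """Return a proxy to service (e.g. after a cool-down) with a clean history."""
        self.quarantined.discard(proxy)
        self.history.pop(proxy, None)
```

The router would call `active()` before each selection, so a quarantined IP drops out of rotation as soon as its window crosses a threshold.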

IP Rotation and Session Control

IP rotation policies significantly impact availability and success rates. Overuse of a single IP accelerates bans, while excessive rotation may break sessions or trigger anti-bot defenses.

Best practices include:

- Sticky Sessions – Maintaining IP consistency per session when necessary.

- Time-Based Rotation – Switching IPs after predefined intervals.

- Request-Based Rotation – Rotating after a set number of requests.

- Adaptive Rotation – Dynamically changing based on detection signals.

Adaptive rotation is the most advanced of these approaches. By analyzing response patterns and anti-bot behavior, the system can react to early warning signs, such as rising CAPTCHA rates, and rotate before an outright block occurs.
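The following simplified sketch combines request-based rotation, time-based rotation, and a basic adaptive trigger for a sticky session. The adaptive part is only reactive: it counts CAPTCHA or block signals on the current IP. It stands in for the more predictive analysis described above. `StickySession` and its default thresholds are hypothetical.

```python
import time

class StickySession:
    """Keep one proxy IP per session until a rotation trigger fires.

    Triggers (all thresholds are hypothetical defaults):
      - request-based: after max_requests requests on the same IP
      - time-based: after max_age_s seconds on the same IP
      - adaptive (reactive): after max_signals CAPTCHA/block signals
    """

    def __init__(self, proxy, max_requests=100, max_age_s=600.0,
                 max_signals=2, clock=time.monotonic):
        self.max_requests = max_requests
        self.max_age_s = max_age_s
        self.max_signals = max_signals
        self.clock = clock          # injectable so the policy can be tested
        self.assign(proxy)

    def assign(self, proxy):
        """Bind the session to a (new) proxy and reset all counters."""
        self.proxy = proxy
        self.requests = 0
        self.signals = 0
        self.started = self.clock()

    def record(self, detection_signal=False):
        """Call once per completed request on the current IP."""
        self.requests += 1
        if detection_signal:
            self.signals += 1

    def should_rotate(self):
        return (self.requests >= self.max_requests
                or self.clock() - self.started >= self.max_age_s
                or self.signals >= self.max_signals)
```

Until a trigger fires, the session keeps its IP. This is what provides sticky behavior for login flows or paginated browsing.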

Scalability Through Automation

High availability must scale with demand. A scraping campaign may suddenly increase traffic volume tenfold. Manual scaling cannot keep up.

Automation enables:

- Auto-provisioning of new proxy nodes

- Automated configuration deployment

- Elastic bandwidth scaling

- Dynamic load redistribution

Infrastructure as Code frameworks and container orchestration platforms simplify scaling operations. When load spikes, new proxy containers can be launched automatically and join the proxy pool without manual intervention.
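For sizing decisions, one common proportional rule is the one used by the Kubernetes Horizontal Pod Autoscaler. The desired replica count equals the current count multiplied by the ratio of the observed metric to its target, rounded up. Like the HPA, the rule ignores small deviations within a tolerance band (0.1 here, matching the HPA's default). The sketch below applies this rule to requests per second per proxy node. The choice of metric, the min/max bounds, and the function name are assumptions made for illustration.

```python
import math

def desired_proxy_nodes(current_nodes, current_rps_per_node, target_rps_per_node,
                        min_nodes=2, max_nodes=200, tolerance=0.1):
    """Return how many proxy containers the pool should run.

    desired = ceil(current * observed / target), left unchanged when the
    ratio is within `tolerance` of 1.0, then clamped to [min_nodes, max_nodes].
    """
    ratio = current_rps_per_node / target_rps_per_node
    if abs(ratio - 1.0) <= tolerance:
        desired = current_nodes               # close enough; avoid flapping
    else:
        desired = math.ceil(current_nodes * ratio)
    return max(min_nodes, min(max_nodes, desired))

# A tenfold spike: 10 nodes each seeing 1,000 rps against a 100 rps target.
print(desired_proxy_nodes(10, 1000.0, 100.0))  # 100
```

The upper bound matters in practice, because every new node also consumes IP capacity and bandwidth budget from upstream providers.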

Security Considerations

Security planning protects both the platform and its users. A proxy network platform should include:

- Encrypted communication channels

- Access control and authentication layers

- Abuse detection systems

- Secure key management

Failure to implement proper safeguards may expose IP pools to abuse, damaging reputation and availability.
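To illustrate the access control and authentication layer, the sketch below verifies HMAC-SHA256 signed requests at the proxy gateway using Python's standard `hmac` module. The signing scheme (client ID plus timestamp), the in-memory key store, and the five-minute skew window are hypothetical choices. A real deployment would load keys from a secrets manager and would likely sign more of the request.

```python
import hashlib
import hmac
import time

# Hypothetical key store; production keys belong in a secrets manager.
CLIENT_KEYS = {"client-42": b"replace-with-a-random-secret"}
MAX_SKEW_S = 300  # reject timestamps more than 5 minutes old or in the future

def sign(client_id: str, timestamp: int, key: bytes) -> str:
    """Compute the hex HMAC-SHA256 signature a client would send."""
    message = f"{client_id}:{timestamp}".encode()
    return hmac.new(key, message, hashlib.sha256).hexdigest()

def authenticate(client_id: str, timestamp: int, signature: str, now=None) -> bool:
    key = CLIENT_KEYS.get(client_id)
    if key is None:
        return False                      # unknown client
    now = time.time() if now is None else now
    if abs(now - timestamp) > MAX_SKEW_S:
        return False                      # narrows the window for replayed requests
    expected = sign(client_id, timestamp, key)
    # Constant-time comparison avoids leaking how many characters matched.
    return hmac.compare_digest(expected, signature)

ts = int(time.time())
print(authenticate("client-42", ts, sign("client-42", ts, CLIENT_KEYS["client-42"])))  # True
```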

Handling Anti-Bot Countermeasures

Modern websites deploy advanced detection methods including fingerprinting, rate analysis, machine learning classification, and behavioral tracking. High availability depends not just on uptime but also on evading detection.

Strategies include:

- Rotating user agents and headers

- Introducing randomized request timing

- Emulating real browser behavior

- Integrating headless browser clusters

A proxy network platform may integrate directly with scraping engines to synchronize IP management with browser-level fingerprint adjustments.

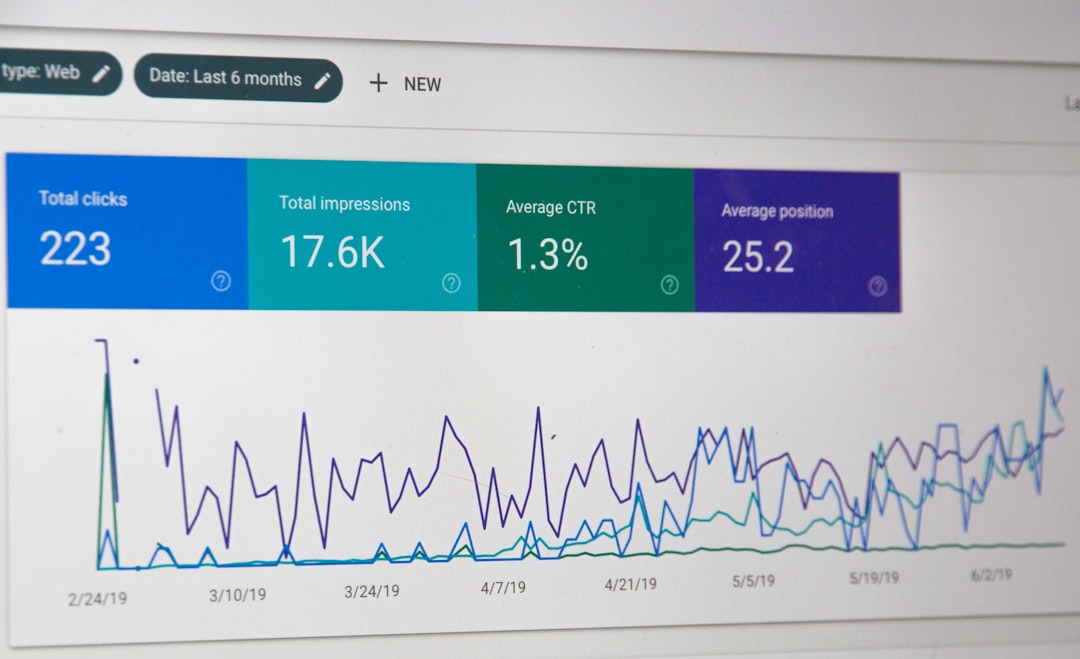

Data Analytics and Feedback Loops

Data-driven decision-making enhances availability. Logging and analytics tools should capture detailed performance data, including:

- Success-to-failure ratios

- Average response latency

- Block trends by region

- IP lifespan metrics

Feedback loops allow the platform to automatically refine rotation strategies and routing preferences. For example, if blocks increase in a certain country, traffic can be diverted elsewhere until reputation stabilizes.
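The country example can be sketched as two small functions. The first aggregates log records into a block rate per region. The second removes regions above a limit from the routing weights and renormalizes the remainder. The record format, the 25% limit, and the function names are assumptions made for illustration.

```python
from collections import defaultdict

def region_block_rates(log_records):
    """Share of blocked requests per region.

    Each record is assumed to look like {"region": "de", "blocked": True}.
    """
    totals = defaultdict(int)
    blocks = defaultdict(int)
    for rec in log_records:
        totals[rec["region"]] += 1
        if rec["blocked"]:
            blocks[rec["region"]] += 1
    return {region: blocks[region] / totals[region] for region in totals}

def routing_weights(base_weights, block_rates, max_block_rate=0.25):
    """Divert traffic away from regions whose block rate exceeds the limit.

    Regions without recent data keep their base weight. If every region is
    over the limit, fall back to the original mix rather than routing nowhere.
    """
    adjusted = {
        region: (0.0 if block_rates.get(region, 0.0) > max_block_rate else w)
        for region, w in base_weights.items()
    }
    total = sum(adjusted.values())
    if total == 0:
        return dict(base_weights)
    return {region: w / total for region, w in adjusted.items()}
```

Rerunning both functions on a rolling window of logs lets a diverted region recover its weight automatically once its block rate falls back under the limit.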

Comparison of Proxy Types for High Availability

| Proxy Type | Reliability | Detection Risk | Cost | Best Use Case |
|---|---|---|---|---|
| Datacenter Proxies | High uptime | Higher detection | Low | High-volume scraping |
| Residential Proxies | Moderate | Low detection | Medium to High | Stealth scraping |
| Mobile Proxies | Variable | Very low detection | High | Targeting strict mobile platforms |

A well-designed network platform typically blends all three types to maximize both availability and stealth.

Disaster Recovery and Business Continuity Planning

No system is immune to catastrophic failure. Disaster recovery strategies must include:

- Backups of routing configurations

- Multi-region failover playbooks

- Cold standby infrastructure

- Incident response protocols

Regular stress testing and simulated outages validate the platform’s ability to recover quickly.
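For the first item, backing up routing configurations, a minimal sketch writes the configuration as canonical JSON together with a SHA-256 checksum. It refuses to restore any file whose checksum no longer matches. The file layout and function names are hypothetical. In practice, the backup would also be copied to storage in another region.

```python
import hashlib
import json
import tempfile
from pathlib import Path

def backup_routing_config(config: dict, path: Path) -> str:
    """Write the config as canonical JSON plus a sidecar SHA-256 checksum."""
    payload = json.dumps(config, sort_keys=True, indent=2).encode("utf-8")
    digest = hashlib.sha256(payload).hexdigest()
    path.write_bytes(payload)
    path.with_name(path.name + ".sha256").write_text(digest)
    return digest

def restore_routing_config(path: Path) -> dict:
    """Load a backup, refusing it if the checksum does not match."""
    payload = path.read_bytes()
    expected = path.with_name(path.name + ".sha256").read_text().strip()
    if hashlib.sha256(payload).hexdigest() != expected:
        raise ValueError(f"checksum mismatch for {path}")
    return json.loads(payload)

# Example: round-trip a small config through a temporary directory.
with tempfile.TemporaryDirectory() as tmp:
    backup_file = Path(tmp) / "routing.json"
    config = {"failover_order": ["eu-west", "us-east"],
              "weights": {"eu-west": 0.4, "us-east": 0.6}}
    backup_routing_config(config, backup_file)
    assert restore_routing_config(backup_file) == config
```

Restoring from these backups during a simulated outage is one way to confirm that the playbook actually works before it is needed.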

Conclusion

Designing a web scraping proxy network platform for high availability is a multidimensional challenge. It demands distributed infrastructure, intelligent routing algorithms, real-time monitoring, automation, and adaptive countermeasure strategies. High availability is not achieved by simply adding more IP addresses; it requires a strategic, integrated approach combining redundancy, analytics, and resilience planning.

Organizations that invest in advanced monitoring, automated failover, and diversified proxy sourcing minimize downtime and maximize scraping efficiency. In a competitive data-driven landscape, a reliable proxy network is no longer optional—it is mission critical.

Frequently Asked Questions (FAQ)

1. What is high availability in a proxy network?

High availability refers to the ability of the proxy network to remain operational with minimal downtime, even during failures, heavy traffic loads, or block events.

2. Why is IP rotation important for web scraping?

IP rotation prevents overuse of a single address, reduces block risk, and distributes traffic patterns to appear more natural and human-like.

3. How can a proxy network automatically recover from failures?

Through health monitoring and automated failover mechanisms, unhealthy nodes are removed from rotation and replaced without manual intervention.

4. Which proxy type offers the best availability?

Datacenter proxies typically provide the highest uptime, while residential and mobile proxies offer better stealth. A hybrid approach usually gives the best balance of the two.

5. How does geographic distribution improve reliability?

It reduces dependency on a single region or provider and allows traffic rerouting if outages or detection events occur in specific locations.

6. What role does automation play in scaling?

Automation enables rapid provisioning of new proxy nodes, dynamic load balancing, and instant response to changing traffic demands.